In 2023, Samsung engineers leaked proprietary semiconductor source code and internal meeting notes through ChatGPT in three separate incidents within weeks of lifting the company's ban on the tool. In 2024, Cyberhaven found that 27.4% of data entered into AI tools at work was sensitive, including source code, customer data, and HR records. Meanwhile, 60% of enterprises reported AI cost overruns exceeding initial estimates by 20% or more. These are not AI failures. They are governance failures. The AI works fine. The organizations have no system for controlling who uses it, what data enters it, how much it costs, or whether anyone is watching. This guide provides a practical framework for mid-to-large enterprises to govern AI across seven domains: access control, cost management, credential security, model management, observability, vendor-managed AI assistants, and supporting infrastructure.

The Governance Gap

The numbers paint a clear picture. ISACA's 2024 AI Readiness Survey found that only 15% of organizations had comprehensive AI governance, 35% had partial governance, and 50% had minimal or no formal framework at all. At the same time, adoption is accelerating. McKinsey's 2024 Global Survey reported that 72% of organizations used AI in at least one business function, up from roughly 50% in prior years.

The gap between adoption speed and governance maturity is the central risk. Microsoft's Work Trend Index found that 78% of AI users brought their own tools to work. 52% were reluctant to admit using AI for important tasks. Salesforce reported that 55% of enterprise AI use was unmanaged by IT. Forrester found that 67% of organizations could not produce a complete list of AI models in use when asked.

This is not a technology problem. Enterprises have dealt with similar patterns before: shadow IT, cloud sprawl, SaaS proliferation. The playbook exists. What is new is the speed. AI tools are cheaper, easier to adopt, and more capable than any prior wave of enterprise technology. The governance framework must match that velocity.

Reference Architecture

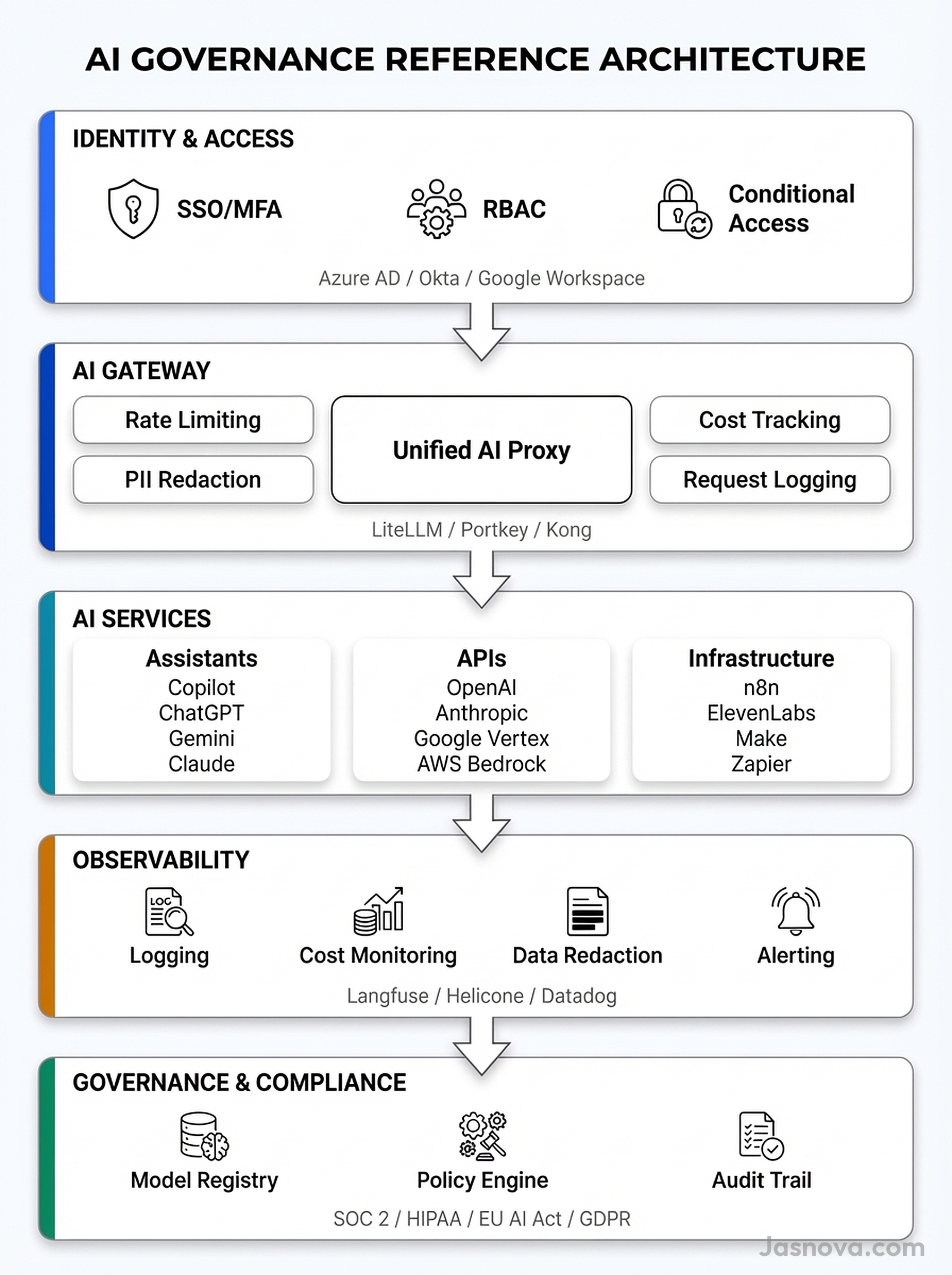

Before addressing each domain, here is how the layers connect. A practical AI governance architecture has five layers, each building on the one below it.

The Identity and Access layer controls who can reach AI services. The AI Gateway routes all programmatic access through a single control point. The AI Services layer encompasses three categories: vendor-managed assistants, direct API access, and supporting infrastructure tools. The Observability layer logs everything. The Governance and Compliance layer provides the policy engine, model registry, and audit trail that tie it all together.

The key principle: centralize the guardrails, decentralize the innovation. A central platform team operates the gateway, sets policies, and maintains the observability stack. Business units select models, build applications, and manage budgets within those guardrails. This federated model prevents the bottleneck of pure centralization while avoiding the chaos of no governance at all.

1. Access Control

Access control for AI is not new technology. It is existing identity infrastructure applied to a new category of tools. The challenge is coverage.

The major enterprise AI assistants now support SAML/OIDC single sign-on and SCIM provisioning. Microsoft 365 Copilot ties into Entra ID with conditional access policies. GitHub Copilot Enterprise supports SAML SSO through GitHub Enterprise Cloud. ChatGPT Enterprise requires SAML SSO and supports SCIM for automated provisioning. Claude Enterprise and Google Gemini for Workspace follow the same pattern.

The standard approach is group-based licensing. Create identity groups in your directory (AI-Copilot-Users, AI-API-Developers, AI-Power-Users) and assign licenses to groups rather than individuals. This enables staged rollouts, budget attribution by department, and automatic deprovisioning when someone changes roles or leaves.
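A minimal sketch of group-based licensing follows. The group names come from the example above; the license identifiers and both function names are hypothetical, and a real deployment would drive this from the directory and the vendors' SCIM endpoints rather than an in-memory table.

```python
from __future__ import annotations

# Hypothetical mapping of directory groups to AI license identifiers.
GROUP_LICENSES = {
    "AI-Copilot-Users": {"m365-copilot"},
    "AI-API-Developers": {"gateway-api-access"},
    "AI-Power-Users": {"m365-copilot", "chatgpt-enterprise"},
}


def licenses_for(groups: set[str]) -> set[str]:
    """Return the union of licenses granted by a user's group memberships."""
    granted: set[str] = set()
    for group in groups:
        granted |= GROUP_LICENSES.get(group, set())
    return granted


def licenses_to_revoke(old_groups: set[str], new_groups: set[str]) -> set[str]:
    """Licenses to reclaim when membership changes (role change or departure)."""
    return licenses_for(old_groups) - licenses_for(new_groups)
```

Because licenses hang off groups, a departing user (empty `new_groups`) loses every license in one step, with no per-user cleanup.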

The contractor gap

Contractor and third-party access is where most organizations have no policy. Common approaches include separate tenants for contractors, B2B guest access with conditional access policies restricting AI features, and time-bound access with automated expiry. McKinsey's 2024 survey found that most organizations handled contractor AI access ad hoc. If you have a policy for employee access but not for contractors, you have half a policy.

The shadow AI problem

Access controls only work for tools you manage. The deeper problem is employees using personal accounts on consumer AI tools. BlackBerry's 2024 cybersecurity survey found that 75% of organizations were implementing or considering bans on specific AI tools, yet 69% of employees admitted to using unapproved tools anyway.

Bans do not work. Providing sanctioned alternatives does. Employees use shadow AI because the official tools are unavailable, too slow to provision, or missing capabilities they need. The most effective governance strategy is making approved tools easier to use than unapproved ones. Supplement this with network-level controls: Cloud Access Security Brokers (CASBs) like Microsoft Defender for Cloud Apps, Netskope, or Zscaler can detect and block access to unauthorized AI endpoints. Browser extensions and endpoint agents from Cyberhaven or Nightfall AI can monitor data flowing to AI services.

2. Cost Monitoring and Budgeting

AI costs arrive in two forms. Per-seat licenses for AI assistants are predictable. API consumption for programmatic access is not.

Seat-based costs

Per-seat costs for major AI assistants range from $19 to $60 per user per month. Microsoft 365 Copilot runs $30/user/month. GitHub Copilot Business is $19/user/month (Enterprise is $39). ChatGPT Enterprise is approximately $60/user/month. Google Gemini for Workspace is $20-30/user/month as an add-on. These are predictable, but utilization often is not. Microsoft reported Copilot license utilization rates of 40-60% in early 2025. Unused seats are waste. Track utilization monthly and reclaim licenses from inactive users.
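The utilization check can be sketched in a few lines. This assumes you can export a last-active date per licensed user from the vendor's admin console; the function names and the 30-day idle threshold are illustrative choices, not vendor defaults.

```python
from __future__ import annotations

from datetime import date, timedelta


def inactive_seats(last_active: dict[str, date], today: date,
                   max_idle_days: int = 30) -> list[str]:
    """Return users whose last recorded activity is older than the idle threshold."""
    cutoff = today - timedelta(days=max_idle_days)
    return sorted(user for user, seen in last_active.items() if seen < cutoff)


def monthly_waste(seat_price: float, total_seats: int, active_seats: int) -> float:
    """Monthly spend on seats that nobody is using."""
    return seat_price * (total_seats - active_seats)
```

At $30/user/month, 1,000 seats with 500 active users means `monthly_waste(30.0, 1000, 500)` returns 15000.0, or $180,000 a year in reclaimable licenses.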

API consumption costs

API costs scale with usage and are harder to predict. Token pricing varies by model: GPT-4o runs approximately $2.50/$10.00 per million input/output tokens. Claude Sonnet 4 is approximately $3.00/$15.00. Claude Opus 4 is $15.00/$75.00. A development team experimenting with a new model can burn through a budget in days if there are no controls.
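To make the arithmetic concrete, here is a small cost estimator using the approximate prices quoted above. The dictionary keys are illustrative labels rather than exact provider model identifiers, and prices change, so treat the table as a snapshot.

```python
# Approximate USD prices per million tokens as (input, output), taken from the text.
# Keys are illustrative labels, not exact provider model identifiers.
PRICES_PER_MILLION = {
    "gpt-4o": (2.50, 10.00),
    "claude-sonnet-4": (3.00, 15.00),
    "claude-opus-4": (15.00, 75.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request from its token counts."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def daily_cost(model: str, input_tokens: int, output_tokens: int,
               requests_per_day: int) -> float:
    """Estimate daily spend for a workload with a fixed request shape."""
    return request_cost(model, input_tokens, output_tokens) * requests_per_day
```

A request with 2,000 input tokens and 500 output tokens costs about $0.01 on GPT-4o and about $0.0675 on Claude Opus 4. At 10,000 requests per day that is roughly $100 versus $675 per day, which is why model choice belongs in the budget conversation.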

The AI gateway is the control point for API costs. Route all API calls through a gateway that enforces per-team and per-project budget limits. When a team hits its monthly allocation, the gateway blocks further requests rather than allowing unbounded spend. Tools like LiteLLM Proxy, Portkey, and Helicone provide budget enforcement out of the box.
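The blocking behavior can be sketched as a simple ledger. This is not how LiteLLM Proxy, Portkey, or Helicone implement budgets; `BudgetLedger`, `BudgetExceeded`, and the method names are hypothetical, and a production gateway would persist spend and reset it each billing period.

```python
from __future__ import annotations

from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a request would push a team past its monthly allocation."""


@dataclass
class BudgetLedger:
    limits: dict[str, float]                              # team -> monthly limit (USD)
    spent: dict[str, float] = field(default_factory=dict)  # team -> spend so far (USD)

    def authorize(self, team: str, estimated_cost: float) -> None:
        """Reject the request before it is sent if it would exceed the budget."""
        limit = self.limits.get(team, 0.0)  # teams without an allocation get none
        if self.spent.get(team, 0.0) + estimated_cost > limit:
            raise BudgetExceeded(f"{team} would exceed its ${limit:.2f} monthly budget")

    def record(self, team: str, actual_cost: float) -> None:
        """Add the actual cost of a completed request to the team's running total."""
        self.spent[team] = self.spent.get(team, 0.0) + actual_cost
```

Checking before the call and recording after it keeps the ledger accurate even when the estimate and the actual token count differ.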

Budget allocation

Three models exist. Centralized (IT holds the budget) provides tight control but slows adoption. Decentralized (each business unit manages its own spend) moves fast but risks sprawl. Federated (central team sets guardrails and allocations, business units spend within them) is emerging as the standard for organizations with 1,000+ employees. It mirrors the cloud landing zone pattern most enterprises already use for cloud infrastructure.

Overruns are common. Gartner predicted that by 2026, 30% of enterprises would exceed their initial AI budgets by more than 50%. Canalys found that 60% of enterprises exceeded initial AI cost estimates by 20% or more in their first year. The surprise costs came from two sources: API usage in development and testing (engineers iterating without cost awareness) and underutilized seat licenses (departments requesting licenses for entire teams when only a fraction used them). Build monitoring before you build applications. Dashboards should show spend by team, by model, by project, and by day. Anomaly detection should alert on unexpected spikes.
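Spike alerting does not need to be sophisticated to be useful. The sketch below flags any day whose spend exceeds the trailing average by a multiple of the trailing standard deviation. The function name, the 7-day window, the threshold of 3, and the 5% floor are all assumptions to tune against your own data.

```python
from __future__ import annotations

from statistics import mean, stdev


def spend_spikes(daily_spend: list[float], window: int = 7,
                 threshold: float = 3.0) -> list[int]:
    """Return indexes of days whose spend is far above the trailing window."""
    spikes = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Floor the deviation at 5% of the mean so a very flat history
        # does not turn ordinary noise into alerts.
        if daily_spend[i] > mu + threshold * max(sigma, 0.05 * mu):
            spikes.append(i)
    return spikes
```

Run it per team and per model rather than on the organization-wide total, since one team's runaway test loop can disappear inside an aggregate.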

3. Credential Security

AI API keys are high-value targets. An exposed OpenAI key lets an attacker run inference on your account until the key is revoked or a spend limit stops them. Multiple reported incidents in 2024-2025 involved exposed API keys being exploited for massive unauthorized inference workloads, with bills exceeding $10,000 before detection.

Where keys should live

API keys for AI services belong in a secrets manager. HashiCorp Vault, AWS Secrets Manager, Azure Key Vault, and GCP Secret Manager all support this. The keys should never appear in source code, .env files committed to repositories, CI/CD configurations, or shared documents.

GitGuardian's 2024 State of Secrets Sprawl report found 12.8 million new secrets exposed in public GitHub repositories in 2023, a 28% increase over the prior year. AI API keys were among the fastest-growing categories. Lasso Security found over 1,500 exposed Hugging Face API tokens in code repositories, some with write access to major organizations' model repositories.
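A rough pre-commit check catches the most obvious leaks before they reach a repository. The patterns below are illustrative approximations based on common key prefixes (`sk-` and `hf_`); real key formats vary and change, so this is a complement to a dedicated secret scanner, not a replacement. The names `KEY_PATTERNS` and `find_candidate_keys` are hypothetical.

```python
from __future__ import annotations

import re

# Illustrative prefix-based patterns; real key formats vary and change over time.
KEY_PATTERNS = {
    "sk-prefixed": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
    "hf-prefixed": re.compile(r"\bhf_[A-Za-z0-9]{20,}"),
}


def find_candidate_keys(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each line that looks like it holds a key."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in KEY_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits
```

The function reports only line numbers and pattern names, never the matched text, so the scanner's own output does not become another place where keys leak.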

Key management practices

Scope keys to the minimum required permissions. OpenAI now supports project-level API keys scoped to specific models and rate limits. Anthropic supports workspace-level separation. Azure OpenAI supports managed identities that eliminate keys entirely for Azure-hosted applications. Use service accounts for machine-to-machine access. Rotate keys on a fixed schedule. Monitor for anomalous usage patterns (sudden spikes in token consumption, requests from unusual IP ranges, access to models the team does not normally use).

Where possible, eliminate keys altogether. Azure OpenAI with managed identities, Google Vertex AI with IAM-based access, and AWS Bedrock with IAM roles all support keyless authentication for cloud-hosted applications. If your application runs on the same cloud as your AI provider, use the cloud's native identity system instead of API keys.

4. Model Management

Enterprises using AI at scale end up with models scattered across teams, projects, and vendors. Without governance, you lose track of what is running where, what data each model has access to, and what happens when a vendor retires a model version.

Model inventory

Start with an inventory. Forrester found that 67% of organizations could not produce a complete list of AI models in use. The inventory should capture: model name and version, vendor, data classification level approved for use, teams using it, use cases, cost, and last evaluation date.

Approved model registry

Maintain an internal registry of approved models mapped to data classification levels. For example: GPT-4o approved for internal data, Azure OpenAI (with data processing agreements) approved for customer data, no external model approved for regulated health data without a BAA. AWS Bedrock, Azure AI Studio, and Google Vertex AI function as de facto approved registries by limiting available models to those the cloud provider has vetted. Use them as the enforcement point rather than building a separate registry.
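The registry lookup reduces to a ceiling check: each approved model carries the highest data classification it may process. The sketch below mirrors the example in the paragraph above; the classification levels, model labels, and `is_approved` function are hypothetical.

```python
from __future__ import annotations

from enum import IntEnum


class DataClass(IntEnum):
    """Data classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CUSTOMER = 2
    REGULATED_HEALTH = 3


# Highest classification each model is approved for (hypothetical entries).
APPROVED_MODELS = {
    "gpt-4o": DataClass.INTERNAL,
    "azure-openai/gpt-4o": DataClass.CUSTOMER,
}


def is_approved(model: str, data_class: DataClass) -> bool:
    """A model is usable only if it is registered and the data is within its ceiling."""
    ceiling = APPROVED_MODELS.get(model)
    return ceiling is not None and data_class <= ceiling
```

Models missing from the registry are denied by default, so a new model stays blocked until someone reviews it and adds an entry.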

Model deprecation tracking

AI providers regularly retire model versions. OpenAI deprecated gpt-3.5-turbo-0301 with 3-6 months notice. Anthropic retired Claude 2 models. Google deprecated PaLM 2 in favor of Gemini. If your production application depends on a specific model version, you need a process for tracking deprecation announcements and planning migrations. The AI gateway is the right place to enforce this: when a model reaches end-of-life, the gateway can route traffic to the approved replacement or reject requests with a clear error directing teams to migrate.
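Gateway-side deprecation handling might look like the following sketch. The model names, dates, and the `resolve_model` function are placeholders invented for illustration; the real table would be populated from provider deprecation announcements.

```python
from __future__ import annotations

from datetime import date
from typing import Optional


class ModelRetired(Exception):
    """Raised when a retired model has no approved replacement."""


# Hypothetical table: model -> (end-of-life date, approved replacement or None).
DEPRECATIONS: dict[str, tuple[date, Optional[str]]] = {
    "legacy-model-v1": (date(2025, 6, 1), "current-model-v2"),
    "legacy-model-v0": (date(2025, 1, 1), None),
}


def resolve_model(requested: str, today: date) -> str:
    """Return the model to call, rerouting or rejecting requests for retired models."""
    entry = DEPRECATIONS.get(requested)
    if entry is None or today < entry[0]:
        return requested  # not deprecated, or still before end-of-life
    end_of_life, replacement = entry
    if replacement is None:
        raise ModelRetired(
            f"{requested} reached end-of-life on {end_of_life}; migrate to an approved model"
        )
    return replacement
```

Silent rerouting keeps applications running, but outputs can shift between model versions, so log every rerouted request and notify the owning team.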

Shadow models

Like shadow IT, shadow model usage occurs when teams adopt models without security review. Direct API access with personal credit cards, open-source models deployed on personal cloud accounts, and new providers adopted outside procurement all create ungoverned exposure. The federated model addresses this from both sides: it makes requesting a new model through the approved process easy, and it uses network controls and cost attribution to make bypassing that process hard.

5. Observability

If you cannot see what your AI systems are doing, you cannot govern them. Observability covers logging, monitoring, cost tracking, and data redaction.

What to log

For every AI interaction: the prompt text (with PII redacted), the response text (with PII redacted), the model used and its version, token counts for input and output, calculated cost, latency (time to first token and total response time), user and team attribution, session ID for multi-turn conversations, and error codes with retry counts. A 2024 Splunk/Enterprise Strategy Group survey found that only 28% of organizations logging AI interactions had comprehensive logging. The majority logged only errors or aggregate metrics.
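One way to keep those fields consistent across applications is a single record type that every gateway request emits. The field names below are one possible schema derived from the list above, not a standard; `AIInteractionLog` is a hypothetical name.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class AIInteractionLog:
    """One log record per AI interaction; text fields hold redacted content only."""
    prompt_redacted: str
    response_redacted: str
    model: str
    model_version: str
    input_tokens: int
    output_tokens: int
    cost_usd: float
    time_to_first_token_ms: int
    total_latency_ms: int
    user_id: str
    team: str
    session_id: str          # groups the turns of a multi-turn conversation
    error_code: Optional[str] = None
    retry_count: int = 0

    def to_json(self) -> str:
        """Serialize to a single JSON line suitable for a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)
```

Making the record immutable and naming the text fields `*_redacted` puts the redaction requirement into the schema itself instead of leaving it to convention.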

PII and sensitive data redaction

Redaction must happen at two points: before data reaches the AI provider (pre-send redaction), to prevent sensitive data from leaving your environment, and in stored logs (log-level redaction), to prevent sensitive data from accumulating in your observability stack.

The pattern is: input passes through PII detection, sensitive content is redacted or replaced with tokens, the redacted input goes to the AI provider, the response passes through the same PII detection, and the redacted pair is written to log storage. Tools for this include Microsoft Presidio (open-source), AWS Comprehend PII detection, Google Cloud DLP, and Private AI. The AI gateway is the natural integration point for pre-send redaction.
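The flow can be sketched end to end with a toy detector. Two regular expressions stand in here for a real PII engine such as Presidio, and they will miss most real-world PII; `redact`, `governed_call`, and the callback parameters are hypothetical names. Returning the redacted response to the caller is an assumption of this sketch, since some deployments return the raw response and redact only the stored copy.

```python
from __future__ import annotations

import re
from typing import Callable

# Toy detectors standing in for a real PII engine; they cover only two formats.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each detected value with a placeholder token such as <EMAIL>."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"<{label}>", text)
    return text


def governed_call(prompt: str,
                  call_model: Callable[[str], str],
                  write_log: Callable[[dict], None]) -> str:
    """Redact before sending, redact the response, and log only the redacted pair."""
    safe_prompt = redact(prompt)           # pre-send redaction
    response = call_model(safe_prompt)     # only redacted text leaves the environment
    safe_response = redact(response)       # same detection applied to the response
    write_log({"prompt": safe_prompt, "response": safe_response})
    return safe_response
```

Passing the model call and log writer in as functions keeps the redaction logic independent of any particular provider SDK or log backend.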

Compliance requirements

Several regulations and audit frameworks require AI logging. SOC 2 requires logging of access to systems processing customer data, so if AI processes customer data, interactions must be logged and auditable. HIPAA requires that protected health information in AI prompts and responses be logged and protected. The minimum necessary standard applies, and a Business Associate Agreement is required with the AI provider. Azure OpenAI and Google Vertex AI offer BAAs. Most direct API providers do not.

The EU AI Act requires automatic event logging for high-risk AI systems, with minimum retention of six months. GDPR applies right of access and right to erasure to personal data in AI logs. If a customer asks what data you processed through AI systems, you need to answer that question. Data residency requirements may restrict which AI providers can be used for which data.

Observability tooling

The market for LLM observability has matured. Langfuse provides open-source tracing, scoring, and cost tracking with self-hosting support, important for regulated industries. Helicone operates as a gateway-based logger with one-line integration. Arize AI focuses on model performance monitoring and embeddings analysis. Datadog LLM Observability integrates with existing Datadog deployments. OpenLLMetry provides OpenTelemetry-compatible instrumentation that feeds into any OTEL backend (Jaeger, Grafana Tempo, Datadog). For organizations already invested in an observability stack, the OpenTelemetry approach avoids adding another tool.

6. Governing AI Assistants

Vendor-managed AI assistants like Microsoft 365 Copilot, GitHub Copilot, ChatGPT Enterprise, and Google Gemini for Workspace present a different governance challenge than API access. You do not control the model, the infrastructure, or the update cycle. Your governance surface is the admin controls the vendor provides.

Microsoft 365 Copilot

Copilot respects existing Microsoft 365 permission boundaries and Purview sensitivity labels. If a document is labeled "Confidential," Copilot will not surface it to users without clearance. SharePoint Advanced Management restricts content discovery to prevent Copilot from surfacing over-shared content. Audit logs are available through Microsoft Purview Audit (requires E5 or audit add-on). The primary risk: Copilot exposes existing over-permissioning. If users have access to SharePoint sites they should not, Copilot will surface that content. Deploy Copilot only after auditing and tightening SharePoint permissions.

GitHub Copilot

Admins can exclude specific repositories or file paths from Copilot suggestions using content exclusion policies. IP indemnity is available for Business and Enterprise customers with the duplicate detection filter enabled. Telemetry controls allow disabling code snippet collection. Organization policies control enablement at the repo level. Audit logs capture seat activity on Enterprise Cloud.

ChatGPT Enterprise and Claude Enterprise

Both require SSO, support SCIM provisioning, and commit to not training on business data. Both offer admin consoles with usage analytics and data retention controls. Both are SOC 2 Type 2 compliant. The governance posture is strong for data that stays within the platform. The risk is data that enters through copy-paste from unauthorized sources or leaves through copy-paste to unauthorized destinations.

Coding assistants

Cursor, Claude Code, and Windsurf are increasingly used by engineering teams. Enterprise controls vary. Evaluate each against your standard: SSO support, data handling commitments, admin controls, audit logging, and IP indemnity. If a tool lacks enterprise controls, it belongs in the "unapproved" column until it has them.

7. Governing Supporting Infrastructure

AI governance extends beyond models and assistants to the tools that connect them to workflows and data.

Workflow automation

Platforms like n8n, Make, and Zapier now include AI nodes that make LLM calls, query vector stores, and execute agent workflows. These tools are powerful and easy to adopt, which means they spread fast. n8n is open-source and self-hostable, which gives IT full control over data flow. Make and Zapier are cloud-hosted, meaning data passes through their infrastructure. Microsoft Power Automate integrates AI Builder and Copilot within the Microsoft 365 governance boundary.

The governance question for each tool: what data enters the AI workflow, where is it processed, and who has access to the results? Self-hosted tools (n8n) keep data within your environment. Cloud-hosted tools (Make, Zapier) require data processing agreements and security review. Apply the same procurement and security review process you use for any SaaS tool that processes business data.

AI-powered SaaS

ElevenLabs (voice synthesis), Midjourney (image generation), Runway (video), and Synthesia (AI avatars) present distinct governance challenges. Employees upload proprietary content: voice samples, brand imagery, confidential scripts. Data retention policies vary. IP ownership of generated content differs by service and pricing tier. Many of these tools lack enterprise-grade admin controls, SSO, or audit logging.

Build a procurement checklist for AI SaaS that covers: data handling and retention, training data usage (does the service train on your inputs?), hosting location and data residency, BAA and DPA availability, SSO and SCIM support, admin console and audit logging, output IP ownership, and SOC 2 or equivalent certification. Tools that fail the checklist should not be approved for business use.
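The checklist is easy to encode so that every review produces the same structured answer. The criterion names below paraphrase the items above and are hypothetical identifiers, as are the two function names.

```python
from __future__ import annotations

# One identifier per checklist item from the paragraph above.
CHECKLIST = (
    "data_retention_documented",
    "no_training_on_inputs",
    "data_residency_acceptable",
    "baa_dpa_available",
    "sso_scim_supported",
    "admin_console_audit_logging",
    "output_ip_ownership_clear",
    "soc2_or_equivalent",
)


def failed_criteria(answers: dict[str, bool]) -> list[str]:
    """Return unmet criteria; an unanswered criterion counts as a failure."""
    return [item for item in CHECKLIST if not answers.get(item, False)]


def is_approvable(answers: dict[str, bool]) -> bool:
    """A tool is approvable only when every criterion is met."""
    return not failed_criteria(answers)
```

Treating missing answers as failures means a vendor that cannot or will not answer a question is handled the same way as one that answers no.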

What Auditors Are Asking

The Big 4 firms (Deloitte, PwC, EY, KPMG) published AI governance guidance in 2024-2025. Their audit questions map directly to the framework above.

- Do you maintain an inventory of AI systems in use? (Model management.)

- What is your AI acceptable use policy? (Access control.)

- How do you control access to AI tools? (Identity and access layer.)

- How do you log and monitor AI interactions? (Observability.)

- How do you ensure sensitive data is not shared with AI providers? (PII redaction.)

- What is your process for evaluating and approving new AI models and tools? (Model registry.)

- How do you manage AI-related third-party risk? (Supporting infrastructure governance.)

- Do you have a process for AI incident response?

If you implement the framework described in this article, you can answer every one of those questions. If you cannot, the auditors will tell you so, and increasingly, regulators will too.

The Regulatory Floor

The EU AI Act is now partially in force. Prohibited practices and AI literacy obligations took effect February 2025. General-purpose AI obligations applied from August 2025. Full high-risk system requirements arrive August 2026. Penalties for the most serious violations reach 35 million euros or 7% of global annual turnover, whichever is higher.

In the US, the regulatory landscape is fragmented but growing. Colorado's AI Act requires deployers of high-risk AI to implement risk management policies and conduct impact assessments (effective 2026). Texas, California, Connecticut, and Illinois have passed or proposed AI-specific laws. Industry regulations layer on top: HIPAA for healthcare AI, SOX and FFIEC guidance for financial AI, FedRAMP for government AI procurement.

Build your governance framework to the strictest standard that applies to you. An organization that satisfies the EU AI Act's logging, human oversight, and risk assessment requirements will satisfy most other regulatory frameworks as a byproduct.

"67% of organizations could not produce a complete list of AI models in use across the organization when asked. You cannot govern what you cannot see."

Forrester Research, 2024

Implementation Roadmap

Months 1-2: Visibility

- Inventory all AI tools, models, and API keys in use across the organization

- Audit AI-related spend across all cost centers (seat licenses, API consumption, SaaS tools)

- Deploy CASB or endpoint monitoring to measure shadow AI usage

- Audit SharePoint/Drive permissions before deploying Copilot or Gemini

Months 3-4: Controls

- Deploy an AI gateway for all programmatic API access with logging, rate limiting, and budget enforcement

- Migrate all AI API keys to a secrets manager with rotation schedules

- Establish an approved model registry with data classification mappings

- Enable admin controls and audit logging on all vendor-managed AI assistants

- Implement PII redaction in the gateway pipeline

Months 5-6: Governance

- Publish an AI acceptable use policy covering all three service categories (assistants, API access, supporting tools)

- Stand up the observability stack with dashboards for cost, usage, errors, and compliance

- Establish procurement criteria for AI SaaS tools

- Define model deprecation and migration processes

- Run a tabletop AI incident response exercise

Ongoing

- Monthly review of AI spend by team and project

- Quarterly audit of model inventory and access permissions

- Annual regulatory compliance review against EU AI Act, industry-specific regulations, and emerging state laws

- Continuous monitoring of shadow AI adoption and new tool evaluations

Key Takeaways

- Only 15% of enterprises have comprehensive AI governance, yet 72% are using AI in at least one business function. The gap between adoption and governance is the central risk

- 78% of AI users bring their own tools to work. Bans do not stop shadow AI. Providing better sanctioned alternatives and network-level visibility does

- The AI gateway is the most important infrastructure investment. Route all programmatic AI access through a central proxy that enforces authentication, rate limits, budget caps, PII redaction, and logging

- 60% of enterprises exceeded initial AI cost estimates by 20% or more. Build cost monitoring before you build applications. Track spend by team, by model, and by project from day one

- AI API keys are high-value targets. 12.8 million secrets were exposed on GitHub in 2023. Store keys in a secrets manager, scope to minimum permissions, rotate on schedule, and eliminate keys entirely where cloud-native identity is available

- Observability is a compliance requirement, not an option. SOC 2, HIPAA, and the EU AI Act all carry logging requirements that reach AI interactions, and GDPR governs the personal data in those logs. Only 28% of organizations have comprehensive logging today

- Federated governance is the right model for mid-to-large organizations: centralize the guardrails, decentralize the innovation